Those of you who know me well will know I like Linux. Don't get me wrong, there's a lot wrong with it, and the community can be a pretty harsh place where more or less everyone has an opinion they think is the right one. For various reasons I don't get much chance to contribute, but earlier this year I was (finally) accepted as a package maintainer for the Fedora project.

Since I like Linux, I tend to use it on the desktop both at home and at work. I do more or less everything with Linux and open source software, including photo editing with GIMP. It bugged me that Fedora ships lensfun, a library of lens correction data, yet the only software in Fedora using it was Digikam. Preferring GIMP, I set about packaging and supporting gimp-lensfun for Fedora.

Becoming a Fedora Package Maintainer is a well documented process, with joining instructions, packaging guidelines and package review guidelines. Well documented as it is, there's a heck of a lot you have to read through and comply with before you can submit your first package review request. It's further complicated by the fact that you don't yet have any reputation within the Fedora community, so you have to build one up in other ways before your package gets noticed and a senior community member agrees to sponsor you as a package maintainer.

I eventually raised my first package review request, for gimp-lensfun, in April 2013. Over a period of a few weeks people throw rocks at what you've done, pointing to the various guidelines you either didn't see or forgot about. This is actually really good and helpful, as it ensures quality and consistency across all the new packages accepted into Fedora.

What I wasn't prepared for was the length of the wait. I know this isn't an earth-shattering change to Fedora, but it took over two years for my package to get noticed enough to be accepted and for me to be sponsored as a Fedora packager. I'd probably still be waiting now if I hadn't bugged a few people on IRC to point out that my review request had been sitting there for so long.

Finally! My first package was released into the wild for Fedora 21 and 22 and I've been given the chance to give just a little back to the community.

Friday 23 December 2016

Thursday 19 November 2015

Compiling the 8192eu driver for the Raspberry Pi

I recently needed to Wi-Fi enable a Raspberry Pi, so I bought a D-Link DWA-131 Wireless USB Adapter. I knew from something I'd read that it was a bit of a gamble whether it would be supported by the Pi under Raspbian. It turns out there are currently three hardware revisions of this adapter, each with a different chipset. The one I got was the latest, rev E1, identified by USB device ID 2001:3319, which requires the Realtek 8192eu driver.
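If you're not sure which revision you have, running lsusb on the Pi lists the vendor:product ID of every attached USB device. The C sketch below shows that check in code. parse_lsusb_ids() and needs_8192eu() are hypothetical helpers, not part of any driver or package; they assume lsusb's usual "Bus NNN Device NNN: ID vvvv:pppp Name" line format and only know about the 2001:3319 ID quoted above.

```c
#include <stdbool.h>
#include <stdio.h>
#include <string.h>

/* USB vendor:product ID of the DWA-131 rev E1 (needs the 8192eu driver). */
#define DWA131_E1_VENDOR  0x2001u
#define DWA131_E1_PRODUCT 0x3319u

/*
 * Hypothetical helper: pull the vendor and product IDs out of one line of
 * lsusb output, e.g. "Bus 001 Device 004: ID 2001:3319 D-Link Corp.".
 * Returns false if the line has no " ID vvvv:pppp" field.
 */
bool parse_lsusb_ids(const char *line, unsigned *vendor, unsigned *product)
{
    const char *p = strstr(line, " ID ");
    if (p == NULL)
        return false;
    /* %4x reads up to four hex digits into an unsigned int. */
    return sscanf(p, " ID %4x:%4x", vendor, product) == 2;
}

/* Hypothetical helper: true if the lsusb line describes the rev E1 adapter. */
bool needs_8192eu(const char *line)
{
    unsigned vendor = 0, product = 0;
    if (!parse_lsusb_ids(line, &vendor, &product))
        return false;
    return vendor == DWA131_E1_VENDOR && product == DWA131_E1_PRODUCT;
}
```

From the shell, lsusb | grep 2001:3319 answers the same question without any code.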

Here's how to get it working under the September 2015 release of Raspbian Jessie, running kernel 4.1.7+. I found some similar instructions a helpful starting point:

1. Get up to date and ready for compilation

sudo apt-get update

sudo apt-get upgrade

sudo apt-get install build-essential git

2. Grab the Driver Source

git clone https://github.com/romcyncynatus/rtl8192eu.git

or download it from D-Link's support site:

http://support.dlink.com.au/download/download.aspx?product=DWA-131

3. Patch the driver source for Kernel 4.x

cd rtl8192eu

Apply the following patch to os_dep/linux/rtw_android.c. Kernel 4.x no longer provides strnicmp(), so when building against kernel 4.0 or later the patch makes rtw_android_cmdstr_to_num() use strncasecmp() instead:

diff --git a/os_dep/linux/rtw_android.c b/os_dep/linux/rtw_android.c
index 40ddf07..f7c496e 100755
--- a/os_dep/linux/rtw_android.c
+++ b/os_dep/linux/rtw_android.c
@@ -342,7 +342,11 @@ int rtw_android_cmdstr_to_num(char *cmdstr)
 {
 	int cmd_num;
 	for(cmd_num=0 ; cmd_num<ANDROID_WIFI_CMD_MAX; cmd_num++)
+#if (LINUX_VERSION_CODE >= KERNEL_VERSION(4,0,0))
+		if(0 == strncasecmp(cmdstr , android_wifi_cmd_str[cmd_num], strlen(android_wifi_cmd_str[cmd_num])) )
+#else
 		if(0 == strnicmp(cmdstr , android_wifi_cmd_str[cmd_num], strlen(android_wifi_cmd_str[cmd_num])) )
+#endif
 			break;
 	return cmd_num;
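The #if guard works because LINUX_VERSION_CODE and KERNEL_VERSION() both pack a kernel version into a single integer that can be compared numerically. The user-space C sketch below (not part of the driver) illustrates this under a few assumptions: it reproduces the classic KERNEL_VERSION() definition from linux/version.h, release_to_code() is a hypothetical helper that converts a uname -r string such as 4.1.7+ into the same encoding, and cmdstr_to_num() mirrors the driver's lookup loop over a shortened, illustrative command table, using strncasecmp() as the patched code does.

```c
#include <stdio.h>
#include <string.h>
#include <strings.h>   /* strncasecmp() in user space */

/*
 * Same encoding as the classic definition in <linux/version.h>:
 * major in bits 16 and up, minor in bits 8-15, patch level in bits 0-7.
 * (Recent kernels additionally clamp the patch level at 255.)
 */
#define KERNEL_VERSION(a, b, c) (((a) << 16) + ((b) << 8) + (c))

/*
 * Hypothetical helper: turn a `uname -r` string such as "4.1.7+" into a
 * LINUX_VERSION_CODE-style number. Returns -1 if it cannot be parsed.
 */
int release_to_code(const char *release)
{
    int major, minor, patch;
    if (sscanf(release, "%d.%d.%d", &major, &minor, &patch) != 3)
        return -1;
    return KERNEL_VERSION(major, minor, patch);
}

/* Illustrative, shortened table; the driver's android_wifi_cmd_str[] is larger. */
static const char *const cmd_str[] = { "START", "STOP", "SCAN-ACTIVE" };
#define CMD_MAX ((int)(sizeof cmd_str / sizeof cmd_str[0]))

/*
 * User-space mirror of rtw_android_cmdstr_to_num(): return the index of the
 * first table entry that prefixes cmdstr, ignoring case, or CMD_MAX if none
 * matches.
 */
int cmdstr_to_num(const char *cmdstr)
{
    int cmd_num;
    for (cmd_num = 0; cmd_num < CMD_MAX; cmd_num++)
        if (strncasecmp(cmdstr, cmd_str[cmd_num], strlen(cmd_str[cmd_num])) == 0)
            break;
    return cmd_num;
}
```

With the Pi's 4.1.7+ kernel the code is 0x040107, which is at least KERNEL_VERSION(4,0,0) (0x040000), so the strncasecmp() branch is the one compiled in.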

4. Grab the rpi-source tool (to download the Pi kernel source)

wget https://raw.githubusercontent.com/notro/rpi-source/master/rpi-source

chmod +x rpi-source

sudo mv rpi-source /usr/bin/

sudo rpi-source -q --tag-update

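5. Download and prepare the source for the running Pi kernel (--skip-gcc skips rpi-source's check that your gcc version matches the one used to build the kernel)

sudo rpi-source --skip-gcc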

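6. Build, install and load the driver

make ARCH=arm

sudo make ARCH=arm install

sudo bash -c 'echo "options 8192eu rtw_power_mgnt=0 rtw_enusbss=0" > /etc/modprobe.d/8192eu.conf'

sudo modprobe 8192eu

The options line turns off the driver's power management (rtw_power_mgnt=0) and USB selective suspend (rtw_enusbss=0) whenever the module is loaded.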

Friday 14 November 2014

Tackling Cancer with Machine Learning

For a recent Hack Day at work I spent some time working with one of my colleagues, Adrian Lee, on a little side project to see if we could detect cancer cells in a biopsy image. We've only spent a couple of days on this so far but already the results are looking very promising with each of us working on a distinctly different part of the overall idea.

We held an open day in our department at work last month, and I gave a lightning talk on the subject, which you can see on YouTube.

Many other talks were given on the day; they can be found in the summary blog post over on the ETS (Emerging Technology Services) site.

Wednesday 22 January 2014

Snooker Cue Maintenance

A slight departure from my usual sort of posts but these are "my notes" so what the heck.

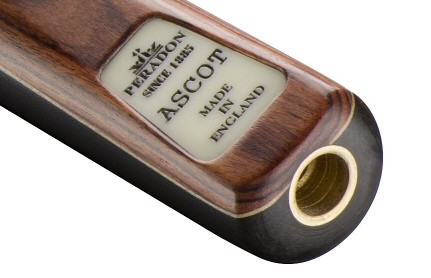

Last Christmas I treated myself (or, strictly speaking, was treated) to a new snooker cue. I've always dabbled with snooker; I'm not particularly good, but I can do enough to get by. My old cue (an 18th birthday present) was getting a bit tired. I decided to go with a really nice English make, Peradon, from Liverpool. Their cues are reasonably priced, but the real treat is the quality of the craftsmanship, and since I'm quite keen on making things from wood I appreciate that side of the cue as much as anything else. It's clearly a cue far above the level at which I actually play the game. I eventually settled on the Ascot ¾ cue.

With such a nice piece of wood, I was surprised to find a complete lack of advice on how to care for and maintain it. I wrote to Peradon for some help, and what follows is their advice, kept here on my blog so I stand a chance of finding it again in the future...

The method is quite simple:

- Rub down with a very fine steel wool (I use Liberon 0000) and wipe away any residue

- Apply raw linseed oil (I use Liberon Raw Linseed Oil) with a lint-free cloth and leave for 20-30 minutes

- Buff the cue with a lint-free cloth

- Repeat if necessary (you can also heat or dilute the linseed oil for multiple coats)

Tuesday 20 August 2013

Nexus 4 Red Light of Death

I've written a few times in the past about various poor or laughable customer experiences I've had dealing with technology and the companies that make or sell it. Things usually work out well in the end, of course, as consumers here in the UK are well protected. However, when my Nexus 4 went wrong late on Saturday night I thought I was in for another world of pain; I couldn't have been more wrong.

The short version of this post is that my phone died late on Saturday night. On Sunday morning I raised a support call. By Tuesday morning I had a brand new phone in my hand, delivered to my door, all under warranty. It seems I needn't have worried: Google appear to have customer support very well sorted out. I only wish the many companies out there that are terrible to deal with would learn the value of keeping customers satisfied even when things go wrong.

The slightly longer version of the story is that my phone completely ran out of charge on Saturday, and when I went to plug it in for a full overnight charge before going to bed, I noticed the LED was solid red. I'd never seen this before, but I left it for a few hours and tried turning it on: nothing. I left it charging overnight and it still wouldn't turn on the next morning. I tried a few other things, such as a different wall outlet and another charger and cable, but all I got was a solid red light and no way to boot the phone.

I phoned Google at 10am on a Sunday in the hope that they ran a call centre on Sundays or that I would be connected to someone abroad who could help. I think that's probably what happened, as it was an all-American experience from start to finish. Benjamin answered the phone, asked me some questions and got me to try a couple of things with the phone; it was still dead. He was first class, easy to understand and took ownership of the issue straight away, and I can still email him directly about the problem now.

After Google support (Ben) realised the phone was dead, there was no quibble, no problem, no hoops to jump through. He told me that he'd send the issue through to the warranty department, who would email me instructions for ordering a new phone, and that when I received it I should send back the old one in the same packaging (standard practice in the tech industry). We parted company, and I'm thinking this is all a bit too easy and something will go wrong later.

A couple of hours later I get an email from Google warranty. It has a link that lets you order a new phone at no cost (the link is only live for 24 hours). I set about ordering the phone; it was Sunday night by this time.

8:30am Tuesday morning and Parcel Force knock at the door and deliver my new phone. Inside the package is a return envelope, exactly as described by Ben at Google and exactly what the warranty email said would happen. I printed the RMA note attached to the email, packaged everything up and it's ready to go back to Google - we're still less than 5 days from the start of the issue at this point.

Quite simply, brilliant. I thought I should say so (or more so).

So would I buy a new Google hardware product again? You bet I would. Software updates come regularly, I'm always at the latest Android level (unlike the Transformer Prime tablet we have in the house which is stuck on 4.1 because Asus dropped support after little more than a year), I don't suffer from the Apple single vendor lock-in issue, and now to top it all off it seems the warranty support is first class.

Saturday 17 August 2013

Android Clients for Twitter

I've been a long time fan of Tweetdeck for managing social media, since long before it was bought by Twitter. I've used it everywhere: via the Air desktop app, on the web, as the Chrome app and on Android too. That is, until now. In their infinite wisdom, Twitter appear to have realised that maintaining two clients across a wide range of platforms no longer makes sense (which raises the question of why they bought it in the first place, but I won't have that argument here), and most versions of Tweetdeck are being phased out, leaving only the web version and the heavily-based-on-the-web-version Chrome app. So for the fans, what's next? Which Twitter client should I be using on my mobile device now? I've been assessing a bunch of them for quite a while, and I know I'm not the only one facing this decision, so here's what I've learned.

First of all to put into perspective my thoughts on each client, here's a list of things I want or that I'm concerned with in an Android client:

- I should very easily be able to get to different content streams from my main Twitter stream, my mentions, searches, lists, to my direct messages. A column interface (similar to Tweetdeck I guess) is ideal for this with a swipe gesture to navigate between them.

- It should support multiple Twitter accounts and switch easily between each account.

- It should be slick, fast, nice to use and be configurable in terms of its look and feel, notifications and ideally offer per column filters.

- It should ideally support push notifications and Twitter streaming. When notifications are pressed in the notifications bar the client should open directly to the Tweet being notified.

- It should not contain adverts. It doesn't matter if I have to pay for the app or pay to get rid of the adverts, paying (within reason) is fine.

- It would preferably be free (as in open source) but I appreciate many of them are not and indeed to get the best Android clients at the moment it's less likely to be so.

So onward, to the apps...

Twitter (official client)

I guess the obvious challenge to a post such as this is to ask what's wrong with the official client. Well, I find it takes ages to get to the content I read regularly. Twitter lists take three taps and a scroll-down to reach (for each list), so the official client fails point 1 in my requirements list. It doesn't do particularly well on points 2 or 3 either. However, it does have the beefed-up mentions column they're calling Interactions, which also includes favourites and new followers. I really like that, so I'm likely to keep it installed just for this until some other clients catch up.

Scope

Requires a special Scope account in order to log in. Screenshots appear to indicate a lack of column support, so I didn't even bother signing in and got rid of it straight away.

Slices

An interesting take on reading Twitter that may once have provided lots of useful extra functionality on top of basic Twitter. However, since the introduction of Twitter lists there seems less point to Slices' ability to carve up your social content into chunks. I found the UI really rather confusing and difficult to navigate, and I quickly got rid of it after some investigation into its features.

Carbon

One of the slickest and nicest looking Twitter clients I think there is, this one really excels at point 3 on my want list with its fancy animations and cool look. In the end, however, it's still a button-based UI that takes far too many taps to reach each piece of content you want to read. That makes it slow and confusing to navigate, so it's a sad farewell when uninstalling this one: it does implement swiping between columns, but you can't configure your own columns to swipe through.

Tweet Lanes

This one does more or less everything I want out of the box with zero setup. All I did was sign in, and a carousel swipe interface loaded with all my tweets, mentions, lists, etc., and more can easily be added. The app also appeals because it's free and open source, so it's good for point 6 on my want list. However, the original developer (who no longer maintains it) left it somewhat feature-incomplete, with no notifications or sync, although it does handle multiple accounts rather nicely. Other developers have hacked in a few of the missing features here and there, but there's still no driving force behind the project any more. I wouldn't expect regular updates, so the amount of life left in this app is probably quite short unless someone takes up the reins. If it weren't in a state of limbo I could seriously like this one, but in the end the lack of attention to detail is just annoying; for example, pressing a notification in the notification bar merely starts the app rather than dropping you in the right place.

Seesmic

A really popular client at one point, even if it's not now. However, I really didn't get Seesmic at all. It's a button-based UI, similar to the official Twitter client, that takes lots of taps to get anywhere. Let's count how I read a Twitter list: Profile Button -> Lists -> List Name. That's three taps, which is too many in my book. So it fails point 1 on my want list in the same way the official client does, and doesn't do well on points 2 or 3 either. It also has ads that I could pay to get rid of, but why pay when it doesn't work very well for me anyway?

UberSocial

Not a bad column-based client that lets you easily add the columns you want and arrange them in the order that suits you. It's easy to switch between accounts too. The big problem with this client is that it's a battery-eater. I've no idea what it's doing, presumably polling quite a lot, but when it was installed and in use on my phone it was one of the top reported apps for battery usage, where none of the other clients showed up at all. If you want decent battery life, don't bother with this one.

TweetCaster

A not particularly easy-on-the-eye, button-based app which, like other similar apps that limit the columns you're allowed and don't swipe between them, makes it rather awkward to traverse all the various parts of Twitter that a modern user might expect. I really don't see the advantage of TweetCaster over the standard Twitter app.

The Winners Are

These three are all very good indeed and for me and my list, worth a shot. I've not yet completely made my mind up which way I'll be going but it will definitely be one of these.

Janetter

With the default theme, this client can be a bit "oh my eyes" with its jet black text on bright white background. Not much imagination in the colouring and shading of the interface except the bright lime green. However, when switched to the dark theme it's much easier on the eye and you can start to look at the functionality a little more closely. It manages multiple accounts very well, returning you to the same account you left when you start the app but making it easy to move between the two accounts - perfect for when you have a major account and other minor accounts you don't read that much. The interface itself is the typical column/swipe style interface and the columns are easily configured, edited and arranged. It also has another feature that is becoming more important in the management of busy columns, the idea of muting certain keywords, hashtags, user accounts or apps. The free version has adverts built in but if this is the app I end up going for then it's a one-time £4.99 purchase to get rid of the ads and unlock a couple of other features.

HootSuite

Really easy to use from the off, with a nice separation of accounts that lets you read content from any particular account with ease. Because accounts are separated, you can only scroll through the columns for the account that's active. However, switching between accounts feels a little awkward to me, as you have to remember how to navigate back to the main start page, which isn't done using the most obvious (and normal for Android) method of clicking the button in the title bar. Other than that, once you've got an account selected it's really quite nice to browse through all the different content, with no ads either, for free. If you want more than a certain number of accounts (I'm not sure how many) or want to unlock some particular features (that I've not found the need for), you need to pay a fairly staggering $9.99 per month for the pro version of the app. That sum, plus the features you get in the paid version, clearly indicates HootSuite is aiming to make money from commercial users of Twitter, where lots of different people might be maintaining lots of different accounts, a marketing department perhaps. The free version is really good, as I've said, but I do wonder if one of my other two favourite apps will win out with a slicker user experience overall.

Plume

I'm really liking Plume at the moment. Along with Janetter, it's quite slick in the way it handles navigation and moving between accounts. However, it does have a couple of annoying "features" that can't be changed. The worst is that every time the app is started it shows a feed of information from all of the accounts configured in the app. Unlike most apps, Plume doesn't separate content from different accounts. They're all shown in the same columns and colour coded to match a particular account. That's great except that most of the time I want to read content from my main Twitter account and only occasionally do I want to dip into content from other accounts. Hence, at the moment every time I start the app I have to tap a couple of buttons to select my main account. How hard can it be to add that as an option? Many users have asked for it and as yet it's not been added. The free version is supported by ads, so you need to shell out the small sum of £3.73 to get rid of them. Another feature similar to Janetter is that you can mute certain things from column content: accounts, hashtags, keywords or apps. This highlights another annoying Plume buglet: not everything you ask to be muted actually gets muted. At least, it seems mutes only apply to the main column and not to specific columns or all columns as you might expect. This is a really excellent Twitter client and if you can work around the few annoyances I've highlighted then it's a real winner. For me, time will tell whether they sort it out enough for me to be able to live with it on a daily basis.

Tuesday 25 June 2013

Machine Learning Course

Enough time has passed since I undertook the Stanford University Natural Language Processing Course for me to have forgotten just how much hard work it was, and so to start all over again. This year I decided to have a go at the Coursera Machine Learning Course.

Unlike the 12 week NLP course last year, which estimated 10 hours a week and turned out to be more like 15-20 hours a week, this course was much more realistic in its estimate of 8 hours a week for 10 weeks. I more or less hit the mark on that, spending about 1 day every week for the past 10 weeks studying machine learning. That's around half the time required for the NLP course.

The course was written and presented by Andrew Ng, who seems to be rather prolific and something of an academic star in his fields of machine learning and artificial intelligence. He is one of the co-founders of Coursera which, along with its main rival Udacity, has brought about the popular rise of Massive Open Online Courses (MOOCs).

The Machine Learning Course followed the same format as the NLP course from last year, which I can only assume is the standard Coursera format, at least for technical courses anyway. Each week there were one or two main topic areas to study. These were presented in a series of videos featuring Andrew talking through a set of slides on which he's able to handwrite notes for demonstration purposes, just as if you're sitting in a real lecture hall at university. To check your understanding of the content of the videos there are questions which must be answered on each topic, and you're graded against them. The second main component each week is a programming exercise, which for the Machine Learning Course must be completed in Octave. That's yet another programming language to add to your list. Achieving a mark of 80% or above across all the questions and programming exercises results in a course pass. I appear to have done that with relative ease for this course.

The 18 topics covered were:

- Introduction

- Linear Regression with One Variable

- Linear Algebra Review

- Linear Regression with Multiple Variables

- Octave Tutorial

- Logistic Regression

- Regularisation

- Neural Networks Representation

- Neural Networks Learning

- Advice for Applying Machine Learning

- Machine Learning System Design

- Support Vector Machines

- Clustering

- Dimensionality Reduction

- Anomaly Detection

- Recommender Systems

- Large Scale Machine Learning

- Application Example Photo OCR

The main idea behind the course seems to be to teach as many different algorithms as possible, and there really is a great range. It starts off simply with linear regression and progresses right up to the current state-of-the-art neural networks and the ever fashionable map-reduce stuff.

I didn't find the course terribly difficult. I'm no expert in any of the topics but have studied enough maths not to struggle with that side of things, and I don't struggle with programming either. I didn't need to use the forums or any of the other social elements offered during the course, so I don't really have a feel for how others found it. I can certainly imagine someone finding it a real struggle without a reasonable background in maths or programming.

There was, as far as I can think right now, one (or maybe two, depending on how you count) omission from the course. Most of the programming exercises were heavily frameworked for you in advance, so you just had to fill in the gaps. This is great for learning the various algorithms presented during the course. However, it does leave a couple of areas at the end of the course you're not so confident with (aside from not really having a wide grasp of the Octave programming language). The omission of which I speak is storing and bootstrapping the models you've trained. All the exercises concentrated on training a model, holding it in memory and using it, and when the program terminates your model disappears with it. It would have been great to have another module on the best ways to persist models between program runs, and on how to continue training (bootstrap) a model that you have already persisted. I'll feed that thought back to Andrew when the opportunity arises over the next couple of weeks.
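Since the course never covered this, here is only a rough sketch of the idea, written in Python rather than Octave. It fits a one-variable linear regression (the first model in the course) with batch gradient descent and saves the learned parameters to a JSON file. It then loads them and carries on training from where it left off. The file format, function names, learning rate and data are all my own assumptions for illustration.

```python
import json
import tempfile
from pathlib import Path


def gradient_descent(xs, ys, theta0=0.0, theta1=0.0, alpha=0.05, iterations=1000):
    """Batch gradient descent for h(x) = theta0 + theta1 * x with a squared-error cost.

    The starting values can come from a previously saved model, which is
    what lets training carry on where an earlier run stopped.
    """
    m = len(xs)
    for _ in range(iterations):
        errors = [theta0 + theta1 * x - y for x, y in zip(xs, ys)]
        # Compute both gradients from the same errors, then update together.
        grad0 = sum(errors) / m
        grad1 = sum(e * x for e, x in zip(errors, xs)) / m
        theta0 -= alpha * grad0
        theta1 -= alpha * grad1
    return theta0, theta1


def save_model(path, theta0, theta1, iterations_done):
    """Persist the learned parameters plus how much training they've had."""
    Path(path).write_text(json.dumps(
        {"theta0": theta0, "theta1": theta1, "iterations_done": iterations_done}))


def load_model(path):
    """Reload a previously saved model as a dict."""
    return json.loads(Path(path).read_text())


if __name__ == "__main__":
    xs = [1.0, 2.0, 3.0, 4.0]
    ys = [3.0, 5.0, 7.0, 9.0]  # made-up data lying on y = 1 + 2x

    with tempfile.TemporaryDirectory() as workdir:
        model_file = Path(workdir) / "model.json"

        # First "program run": train briefly and save the result.
        t0, t1 = gradient_descent(xs, ys, iterations=200)
        save_model(model_file, t0, t1, 200)

        # A later run: load the saved model and continue training from it.
        model = load_model(model_file)
        t0, t1 = gradient_descent(xs, ys, model["theta0"], model["theta1"], iterations=2000)
        save_model(model_file, t0, t1, model["iterations_done"] + 2000)
        print(f"theta0={t0:.3f}, theta1={t1:.3f}")
```

With a real model you would save everything it needs (for a neural network, all of the weight matrices). In Octave itself, the built-in `save` and `load` commands could play the part of `save_model` and `load_model`.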

The problem going forward won't be so much applying what has been offered here as working out what to apply it to. The range of problems that can be tackled with these techniques is mind-blowing. Just look at the rise of analytics we're seeing in all areas of business and technology.

Overall then, a really nice introduction into the world of machine learning. Recommended!

Sunday 2 June 2013

Making a Cajón

When I asked my best mate what he wanted for his birthday this year he came back with something rather unexpected: "I'd really like a Cajón!". I'd never heard of one before, so he explained what it was and I looked it up a bit later too. The sort of thing he wanted, something with an electrical pick-up (to make it semi-acoustic) and an adjustable snare too, turned out to be a bit out of budget. After a bit of research into various makes and models I wondered how hard it could really be (it's just a wooden box after all) and offered to make one. Matt quickly warmed to the idea. So, with his knowledge of what he wanted in the way of design and my woodworking experience, we set about a joint project that we've just finished this weekend.

To save a long blog post about exactly what we did, I'll simply refer you to a video (below). This is more or less exactly what we made, following Steve Ramsey's design almost to the letter. There were a few things we had to make up that the video didn't explain very well. We also made a couple of design adjustments where we found the video to be incorrect (we weren't the only ones to notice the problem).

The main departure from Steve's design in the video was the inclusion of an electric pick-up. Even so, we didn't stray from Steve's advice. We simply followed his design for an electric pick-up, using a piezo transducer and a 6mm jack socket soldered together, as can be seen from about 4:30 in the video for his stomp box.

We took pictures all the way through which can be seen in chronological order in my Flickr set or via the slideshow at the bottom of this post. We started off with a bunch of different stuff we needed to work up. Here's Matt with the cheesy-grinned first picture before we got started, posing with the various bits and pieces:

More or less everything we used is there in the picture above:

- 4' x 2' x ¾" birch faced ply sheet (for the top, bottom and sides)

- 3mm ply (for the front piece, called the tapa)

- 25mm dowel rod

- piezo transducer and 6mm jack socket

- 4 speaker feet

- Snare wire

- 2 knobs, M6 thread, 40mm long

- Clear wax

- Glue

On the first afternoon's work, the birch ply was cut to size and rebated to form a box shape, albeit not yet glued together:

This was actually the main part of the work we had to do. The next time we got together we modified the back panel so it had a large hole in it (to let the sound out) and a fitting for the jack socket to be screwed through. After that came the tricky business of fitting the adjustable snare dowel rod mechanism to the sides, which can best be seen in a couple of different pictures. Once all that was done we were able to glue it all together and leave it clamped up for a couple of days to dry. The result was a completed box:

Finally, we cut the front to size and fitted that, waxed the whole thing, then fitted the feet and jack socket. We gave it a few different tests. First was to sit on it (since that's how they're played), and it survived that. Then Matt had his first play on it in my garage, before we headed indoors to hook it up to the stereo to test the semi-acoustic-ness of it. Everything worked well.

We're both really pleased with it. It's solidly constructed and feels like it should last a good many years' use. All the tweaks to a basic Cajón design work really well, including the adjustable snare and the electric pick-up. It looks really good too. We were really lucky to source such a nice looking piece of ply (thanks to my cousins at Ascot Timber Buildings), and we finished it off with nicely rounded corners and a good quality clear wax. Of course, the really important bit is the sound, and fortunately it performs on that front too (better than I'd expected). The bass notes from the middle sound really deep and can be quite loud if you're really going for it, and they graduate to a nice high pitch as you move towards playing at the sides. When turned on, the snare adds an extra dimension when hitting near the top too.

So, it's happy birthday to Matt (a wee bit late, since we started making it just after his birthday). Loads of people have been interested in the project as we've been going through it, so I'm sure he's going to be a busy boy showing it off all over the place now.

I'll close out with the slideshow and another mention of thanks to Steve Ramsey for his excellent video tutorial.

Friday 17 May 2013

I'd Like to Fix Your Computer

I've heard of other people receiving spam phone calls from dodgy companies claiming to offer free help and advice to check and fix problems with your PC. I just got my first one and had a bit of fun with it.

For reference, the number that called me was 01827 880580 and the company claimed to be called "PC Wizard". Google searching that telephone number only results in finding pages of blacklisted numbers. I also called the number back and it doesn't connect.

The call started with an Indian sounding woman on the other end of the line, who asked me to start my PC and tell her when it was ready. I responded instantly, saying that I was at the computer and it was on. That was the only introduction we had. She offered no name for herself, only that her company was called "PC Wizard".

Next she told me I had a Control key on the keyboard and that next to it was a Windows key. She asked me to hold that down and press the R key. Now even I, as a non-Windows user, know that brings up the Run dialog. When she asked what I could see, I said I had a prompt to run a command. She asked what it contained and I said it was empty.

She asked me to type in eventvwr and asked what I could see. I said it had brought up a window after I clicked OK but that window was empty and I couldn't see anything at all. She double-checked that I had indeed pressed the OK button and then sounded rather confused and said she was going to pass me over to one of the senior technicians (I'm shaking in my boots now, it must be *really* bad).

Some guy (also Indian sounding) came onto the phone and wanted to take me through the same steps. I asked him why he was calling and how he got my number. He just repeated the same rubbish, saying he was from "PC Wizard" and was the senior technical advisor who was going to help me fix my Windows PC. At this point I came clean and said that actually I'm not running Windows and I don't have a Start button. He gave a confused response (bet he's rehearsed that before). I told him I was actually running Linux. I was about to quote the Data Protection Act to state that he should not contact me again and should delete my details, not that I would expect that to do a lot of good.

As soon as he heard the word Linux, he hung up. I tried anonymously calling the number back but it doesn't connect.

It seems this is an old scam and I really do feel sorry for the people who have been caught out.

Monday 22 April 2013

Speech to Text

Apologies to the tl;dr brigade, this is going to be a long one...

For a number of years I've been quietly working away with IBM Research on our speech to text programme. That is, working with a set of algorithms that ultimately produce a system capable of listening to human speech and transcribing it into text. The concept is simple: train a system for speech to text, so that speech goes in and text comes out. However, the process and algorithms to do this are extremely complicated from just about every angle: computationally, mathematically, operationally, in terms of evaluation, and in time and cost. This is a completely separate topic and area of research from the similar sounding text to speech systems, which take text (such as this blog) and read it aloud in a computerised voice.

Whenever I talk to people about it they always appear fascinated and want to know more. The same questions often come up. I'm going to address some of these here in a generic way, leaving out those that I'm unable to talk about. I should also point out that I'm by no means a speech expert or linguist. I have, however, developed enough of an understanding to be dangerous in the subject matter, and that (I hope) allows me to explain things in a way that others not familiar with the field are able to understand. I'm deliberately not linking out to the various research topics that come into play during this post. The list would become lengthy very quickly, and this isn't a formal paper after all. Internet searches are your friend if you want to know more.

I didn't know IBM did that?

OK, so not strictly a question, but the answer is yes, we do. We happen to be pretty good at it as well. However, we typically use a company called Nuance as our preferred partner.

People have often heard of IBM's former desktop PC product in this area, ViaVoice, which was available until the early 2000s. This sort of technology allowed a single user to speak to their computer for various purposes, but it required the user to spend some time training the software before it would understand their particular voice. Today's speech software has progressed beyond this to systems that don't require any training by the user before they use it. Current systems are trained in advance in order to attempt to understand any voice.

What's required?

Assuming you have the appropriate software and the hardware to run it on, you need three more things to build a speech to text system: audio, transcripts and a phonetic dictionary of pronunciations. This sounds quite simple, but when you dig under the covers a little you realise it's much more complicated (not to mention expensive) and the devil is very much in the detail.

On the audio side you'll need a set of speech recordings. If you want to evaluate your system after it has been trained, then a small sample of these should be kept to one side and not used during the training process. This set of audio used for evaluation is usually termed the held out set. It's considered cheating to later evaluate the system using audio that was included in the training process. Since the system has already “heard” this audio, it would have a higher chance of accurately reproducing it later. Creating the held out set leaves two sets of audio files: the held out set and the remaining majority of the audio, which is called the training set.
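Here is a rough sketch of carving out the held out set, assuming the recordings are just a list of wave file names. The 5% fraction, the fixed random seed and the function name are arbitrary choices for illustration, not a recommendation. It's often advised to keep all of one speaker's recordings on the same side of the split so the evaluation really uses unseen voices, and this sketch doesn't attempt that.

```python
import random


def split_held_out(audio_files, held_out_fraction=0.05, seed=42):
    """Split audio files into (training_set, held_out_set).

    The held out files are never given to the trainer, so they can be used
    later for a fair evaluation of the trained system.
    """
    files = sorted(audio_files)   # sort first so the split is reproducible
    rng = random.Random(seed)     # fixed seed: the same split on every run
    rng.shuffle(files)
    n_held_out = max(1, round(len(files) * held_out_fraction))
    held_out = files[:n_held_out]
    training = files[n_held_out:]
    return training, held_out


if __name__ == "__main__":
    recordings = [f"utt{i:04d}.wav" for i in range(200)]
    train, held = split_held_out(recordings)
    print(len(train), len(held))  # 190 training files, 10 held out
```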

The audio can be in any format your training software is compatible with, but wave files are commonly used. The quality of the audio will have a direct bearing on the resulting accuracy of the system being trained. That covers the digital quality (e.g. sample rate) as well as the quality of the speaker(s) and of the equipment used for the recordings. Simply put, the better the quality of the input, the more accurate the output will be. This leads to another bunch of questions, including but not limited to “What quality is optimal?”, “What should I get the speakers to say?” and “How should I capture the recordings?”. All of these are research topics in their own right, and there is no one-size-fits-all answer to any of them.

Capturing the audio is one half of the battle. The next piece in the puzzle is obtaining well transcribed textual copies of that audio. The transcripts should consist of text representing what was said in the audio, as well as some indication of when during the audio a speaker starts speaking and when they stop. This is usually done on a sentence by sentence basis, or for each utterance as they are known. These transcripts may have a certain amount of subjectivity associated with them in terms of where the sentence boundaries are. There may also be subjectivity in exactly what was said, if the audio wasn't clear or slang terms were used. They can be formatted in a variety of ways, and there are various standard formats for this purpose, from an XML DTD through to CSV.
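As an illustration, here is one possible CSV layout for utterance-level transcripts and a small parser for it. The column names (`file`, `speaker`, `start`, `end`, `text`) and the sample rows are made up for this sketch. Real formats vary.

```python
import csv
import io
from dataclasses import dataclass


@dataclass
class Utterance:
    audio_file: str
    speaker: str
    start: float  # seconds from the start of the recording
    end: float
    text: str

    @property
    def duration(self) -> float:
        return self.end - self.start


def read_transcripts(handle):
    """Parse utterance rows from a CSV file-like object that has a header row."""
    utterances = []
    for row in csv.DictReader(handle):
        utt = Utterance(row["file"], row["speaker"],
                        float(row["start"]), float(row["end"]), row["text"].strip())
        # A basic sanity check: catches swapped or mistyped timestamps.
        if utt.end <= utt.start:
            raise ValueError(f"utterance ends before it starts: {row}")
        utterances.append(utt)
    return utterances


if __name__ == "__main__":
    sample = io.StringIO(
        "file,speaker,start,end,text\n"
        "news01.wav,spk1,0.00,3.20,good evening and welcome\n"
        "news01.wav,spk1,3.45,7.90,here are tonight's headlines\n"
    )
    for u in read_transcripts(sample):
        print(f"{u.speaker} {u.start:.2f}-{u.end:.2f}s: {u.text}")
```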

If it has not already become clear, creating the transcription files can be quite a skilled and time consuming job. A typical industry expectation is that it takes approximately 10 man-hours for a skilled transcriber to produce 1 hour of well formatted audio transcription. This time, plus the cost of collecting the audio in the first place, is one of the factors making speech to text a long, hard and expensive process. That is particularly true given that most current commercial speech systems are trained on at least 2,000 hours of audio, with the minimum recommended amount being somewhere in the region of 500 hours.
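To put those figures together, here is a quick back-of-the-envelope calculation using the 10:1 ratio quoted above. The ratio is the only input, and real projects will vary around it.

```python
def transcription_effort(audio_hours, hours_per_audio_hour=10):
    """Skilled transcriber time (man-hours) needed for a given amount of audio."""
    return audio_hours * hours_per_audio_hour


if __name__ == "__main__":
    for corpus_hours in (500, 2000):
        effort = transcription_effort(corpus_hours)
        print(f"{corpus_hours:,} hours of audio -> about {effort:,} man-hours of transcription")
```

That works out to 5,000 and 20,000 man-hours respectively. Assuming roughly 2,000 working hours per person per year, the larger corpus is around ten years of one transcriber's time, before any audio has even been collected.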

Finally, you must either obtain or produce a phonetic dictionary containing at least one pronunciation variant for each word said across the entire corpus of audio input. Even for a minimal system this will run into tens of thousands of words. There are, of course, phonetic dictionaries already available, such as the Oxford English Dictionary, which contains a pronunciation for each word it lists. However, these would only be appropriate for one regional accent or dialect, without variation. Hence, producing the dictionary can also be a long and skilled manual task.
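Pronunciation dictionaries are often kept as plain text, one pronunciation per line, with extra variants of the same word marked by a numbered suffix such as `(2)`. The sketch below assumes that CMUdict-like layout, with simplified phone symbols (no stress markers). It loads the entries into a word-to-variants map and lists any transcript words that still have no pronunciation, which are exactly the gaps that have to be filled by hand. The function names and the example entries are illustrative.

```python
import re
from collections import defaultdict

# Matches "WORD" or "WORD(2)" followed by whitespace and its phones.
ENTRY = re.compile(r"^(?P<word>[^\s(]+)(?:\(\d+\))?\s+(?P<phones>.+)$")


def load_dictionary(lines):
    """Map each word to a list of pronunciation variants (each a list of phones)."""
    prons = defaultdict(list)
    for line in lines:
        line = line.strip()
        if not line or line.startswith(";;;"):  # skip blank lines and comments
            continue
        match = ENTRY.match(line)
        if match:
            prons[match["word"].upper()].append(match["phones"].split())
    return dict(prons)


def missing_words(transcript_words, prons):
    """Words used in the transcripts that have no pronunciation yet."""
    return sorted({w.upper() for w in transcript_words} - set(prons))


if __name__ == "__main__":
    dictionary = load_dictionary([
        ";;; tiny example dictionary",
        "TOMATO  T AH M EY T OW",
        "TOMATO(2)  T AH M AA T OW",
        "SOUP  S UW P",
    ])
    print(dictionary["TOMATO"])  # two pronunciation variants
    print(missing_words("tomato soup tonight".split(), dictionary))  # ['TONIGHT']
```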

What does the software do?

The simple answer is that it takes audio and transcript files and passes them through a set of really rather complicated mathematical algorithms. The result is a model that is particular to the input received. This is the training process. Once the system has been trained, the model it generates can be used to take speech input and produce text output. This is the decoding process. The training process requires lots of data and is computationally expensive. The model it produces, by contrast, is comparatively small and much less computationally expensive to run. Today's models are typically able to perform real-time (or faster) speech to text conversion on a single core of a modern CPU. It is the model, and the software surrounding it, that is exposed to users of the system.

Various steps are used during the training process to iterate through the different modelling techniques across the entire set of training audio provided to the trainer. When the process first starts the software knows nothing of the audio. There are no clever bootstrapping techniques used to kick-start the system in a certain direction or pre-load it in any way. This allows the software to be entirely generic and work for all sorts of different languages and qualities of material. Starting in this way is known as a flat start, or context independent training. The software simply chops up the audio into regular segments to start with. It then performs several iterations in which these boundaries are shifted slightly to match the boundaries of the speech in the audio more closely.
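The "chop it into regular segments" idea can be sketched in a few lines. This assumes an utterance has already been turned into a sequence of fixed-length feature frames (a 10ms frame shift is common) and that its words have been expanded into phones using the dictionary. The first alignment then shares the frames out evenly between the phones, and later iterations would move these boundaries. The function name and frame counts are illustrative.

```python
def uniform_segmentation(num_frames, phones):
    """Share num_frames evenly between phones for an initial flat-start alignment.

    Returns (phone, first_frame, end_frame_exclusive) tuples. Any leftover
    frames are handed out one at a time to the earliest segments.
    """
    if num_frames < len(phones):
        raise ValueError("need at least one frame per phone")
    base, extra = divmod(num_frames, len(phones))
    segments, start = [], 0
    for i, phone in enumerate(phones):
        length = base + (1 if i < extra else 0)
        segments.append((phone, start, start + length))
        start += length
    return segments


if __name__ == "__main__":
    # "cat" -> K AE T over 32 frames (about 0.32s at a 10ms frame shift)
    for seg in uniform_segmentation(32, ["K", "AE", "T"]):
        print(seg)
```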

The next phase is context dependent training. Here each speech sound is modelled in the context of the sounds either side of it, since the way a sound is pronounced changes with its neighbours. This phase starts to make the model a little more specific and tailored to the input being given to the trainer. The pronunciation dictionary is used to refine the model, producing an initial system that could, even at this early stage, be used to decode speech into text in its own right. Context dependent training is an iterative process in itself, and the whole phase is typically also run multiple times to hone the model still further.
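A common way to represent that context is the triphone: a phone labelled together with the phone before it and the phone after it. The sketch below expands a phone sequence into triphone labels in the left-centre+right style used by toolkits such as HTK. It pads the ends of the utterance with a silence phone, and the `sil` symbol is an assumption.

```python
def to_triphones(phones, boundary="sil"):
    """Expand a phone sequence into left-centre+right triphone labels."""
    padded = [boundary] + list(phones) + [boundary]
    return [
        f"{padded[i - 1]}-{padded[i]}+{padded[i + 1]}"
        for i in range(1, len(padded) - 1)
    ]


if __name__ == "__main__":
    print(to_triphones(["K", "AE", "T"]))
    # ['sil-K+AE', 'K-AE+T', 'AE-T+sil']
```

The AE in "cat" and the AE in "bad" now get different labels (`K-AE+T` versus `B-AE+D`), so they can be modelled separately.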

Another optimisation that can be made to the model after context dependent training is vocal tract length normalisation. This works on the theory that the acoustic characteristics of a voice, in particular the frequencies at which its resonances (formants) occur, depend on the length of the speaker's vocal tract. Put simply, men tend to have longer vocal tracts and lower sounding voices than women. If we warp the frequency scale of every voice in the training material so that they all appear to come from the same vocal tract length, recognition accuracy improves. To do this, an estimate of the vocal tract length (in practice, a frequency warping factor) must first be made for each speaker in the training data. That normalisation factor can then be applied to the speaker's material and the model updated to reflect the change.
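Here is a minimal sketch of the two ingredients, under stated assumptions. The first is a piecewise-linear warp of the frequency axis. This is one common choice, but toolkits differ in the exact shape and in where the break point sits. The second is a grid search that picks each speaker's warp factor by trying a range of values and keeping the one the current model scores highest. The `score` function stands in for something like the likelihood of that speaker's audio under the model. It, the 0.85 break ratio and the 0.88 to 1.12 range are all assumptions for illustration.

```python
def warp_frequency(f, alpha, f_max, break_ratio=0.85):
    """Piecewise-linear vocal tract length warp of frequency f (Hz).

    Below the break point frequencies are scaled by alpha. Above it, a second
    straight line takes over so that f_max still maps to f_max.
    """
    f_break = break_ratio * f_max
    if alpha * f_break >= f_max:
        raise ValueError("alpha too large for this break point")
    if f <= f_break:
        return alpha * f
    slope = (f_max - alpha * f_break) / (f_max - f_break)
    return alpha * f_break + slope * (f - f_break)


def best_warp_factor(score, low=0.88, high=1.12, step=0.02):
    """Try each warp factor on a grid and keep the one with the highest score."""
    n_steps = round((high - low) / step)
    candidates = [round(low + i * step, 4) for i in range(n_steps + 1)]
    return max(candidates, key=score)


if __name__ == "__main__":
    print(warp_frequency(1000.0, 1.1, 8000.0))
    print(warp_frequency(8000.0, 1.1, 8000.0))
    # Toy score peaking at 1.04, standing in for a per-speaker model likelihood.
    print(best_warp_factor(lambda a: -(a - 1.04) ** 2))
```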

The model can be thought of as a tree, although it's actually a large multi-dimensional matrix. Reducing the number of dimensions in the matrix and applying various other mathematical operations to shrink the search space can improve the model further in terms of accuracy, speed and size. This is generally done after vocal tract length normalisation has taken place.

Another tweak that can be made to improve the model is what we call discriminative training. The theory goes along these lines: all of the training material is decoded using the current best model produced by the previous step. This produces a set of text files, which can be compared with those produced by the human transcribers and given to the system as training material. The comparison shows where the model can be improved, and those improvements are then applied to the model. It's a step that can probably best be summarised as learning from its mistakes. Clever!
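Real discriminative training adjusts the model's parameters using statistics gathered from the decoder's competing hypotheses, which is well beyond a blog post. The comparison it relies on can be sketched with Python's standard `difflib` module, though: lining up what the decoder produced against what the human transcriber wrote, to see where they disagree. The function name and example sentences are made up.

```python
from difflib import SequenceMatcher


def find_mistakes(reference, decoded):
    """List the places where the decoded text disagrees with the reference.

    Each entry is (kind, reference_words, decoded_words), where kind is
    'replace', 'delete' (words the decoder missed) or 'insert' (extra words).
    """
    ref, hyp = reference.split(), decoded.split()
    matcher = SequenceMatcher(a=ref, b=hyp, autojunk=False)
    return [
        (tag, ref[i1:i2], hyp[j1:j2])
        for tag, i1, i2, j1, j2 in matcher.get_opcodes()
        if tag != "equal"
    ]


if __name__ == "__main__":
    reference = "it is hard to recognise speech"
    decoded = "it is hard to wreck a nice beach"
    for mistake in find_mistakes(reference, decoded):
        print(mistake)
```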

Finally, once the model has been completed, it can be used with a decoder that knows how to understand that model to produce text from an audio input. In reality, decoders tend to operate on two different models. One is the acoustic model, whose creation has just been roughly explained. The other is a language model. The language model is simply a description of how language is used in the specific context of the training material. It would, for example, attempt to provide insight into which words typically follow which other words, using what natural language processing experts call n-grams. Information to produce the language model is much easier to obtain, and it doesn't have to come entirely from the transcripts used during the training process. Any text data that is considered representative of the speech being decoded could be useful. For example, in an application targeted at decoding BBC News readers, articles from the BBC News web site would likely prove a useful addition to the language model.
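To make n-grams a bit more concrete, here is a minimal bigram (2-gram) model estimated by simple counting, i.e. maximum likelihood with no smoothing. Real language models add smoothing so that word pairs never seen in the text don't get zero probability. That's left out here for brevity, and the example sentences are made up.

```python
from collections import Counter


def train_bigrams(sentences):
    """Count word pairs, using <s> and </s> to mark sentence boundaries."""
    unigrams, bigrams = Counter(), Counter()
    for sentence in sentences:
        words = ["<s>"] + sentence.lower().split() + ["</s>"]
        unigrams.update(words[:-1])           # every word that has a successor
        bigrams.update(zip(words, words[1:]))
    return unigrams, bigrams


def bigram_probability(prev, word, unigrams, bigrams):
    """P(word | prev) = count(prev, word) / count(prev)."""
    if unigrams[prev] == 0:
        return 0.0
    return bigrams[(prev, word)] / unigrams[prev]


if __name__ == "__main__":
    corpus = [
        "the news at ten",
        "the weather at ten",
        "the news tonight",
    ]
    uni, bi = train_bigrams(corpus)
    print(bigram_probability("the", "news", uni, bi))  # 2 of the 3 "the"s -> 2/3
```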

How accurate is it?

This is probably the most common question about these systems, and one of the most complex to answer. As with most things in the world of high technology it's not simple, so the answer is the infamous “it depends”. The short answer is that in ideal circumstances the software can perform at near human levels of accuracy, which means in excess of 90%. Pretty good, you'd think. It has been shown that human performance is somewhere in excess of 90% and is almost never 100%. The test for this is quite simple. You get two (or more) people to independently transcribe some speech and compare the results from each transcriber. There will almost always be a disagreement about some part of the speech (if there's enough speech, that is).
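Accuracy figures like these are usually derived from the word error rate (WER). That's the minimum number of word substitutions, deletions and insertions needed to turn the system's output into the reference transcript, divided by the number of words in the reference. Accuracy is then often quoted as 1 - WER. The same calculation works for the human test described above, by treating one transcriber's version as the reference. This is a minimal sketch using the classic edit-distance recurrence over words, with a made-up example sentence.

```python
def word_error_rate(reference, hypothesis):
    """(substitutions + deletions + insertions) / number of reference words."""
    ref, hyp = reference.split(), hypothesis.split()
    if not ref:
        raise ValueError("reference transcript is empty")
    # prev holds the previous row of the word-level edit-distance table.
    prev = list(range(len(hyp) + 1))
    for i in range(1, len(ref) + 1):
        curr = [i] + [0] * len(hyp)
        for j in range(1, len(hyp) + 1):
            cost = 0 if ref[i - 1] == hyp[j - 1] else 1
            curr[j] = min(prev[j] + 1,         # deletion
                          curr[j - 1] + 1,     # insertion
                          prev[j - 1] + cost)  # substitution or match
        prev = curr
    return prev[len(hyp)] / len(ref)


if __name__ == "__main__":
    ref = "the quick brown fox jumps over the lazy dog"
    hyp = "the quick brown fox jumped over a lazy dog"
    wer = word_error_rate(ref, hyp)
    print(f"WER {wer:.1%}, accuracy {1 - wer:.1%}")
```

Because insertions count as errors, WER can exceed 100% on badly recognised audio. That's one reason error rates tend to be reported rather than raw "accuracy".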

It's not often that ideal circumstances are present or can even realistically be achieved. Ideally, the speaker would have a similar voice and accent to those trained into the model, would speak at the right speed (not too fast and not too slowly) and would use a directional microphone that didn't do any fancy noise cancellation, etc. What people are generally interested in is the real-world situation, something along the lines of “if I speak to my phone, will it understand me?”. This sort of real-world environment often includes background noise and a very wide variety of speakers, potentially speaking into a non-optimal recording device. Even then, the accuracy question has more than one answer. In this blog post we're talking about free, conversational-style speech, and there's a huge difference between recognising any and all words and recognising a small set of command and control words, such as those you might use to make your phone perform a specific action. In conclusion, then, we can only really speak about the art of the possible and what has been achieved before. If you want to know about accuracy for your particular situation and your particular voice on your particular device then you'd have to test it!
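To see why a small set of commands is so much easier than open conversation, consider that a command recogniser only has to pick the best of a handful of options. The sketch below illustrates the idea with Python's standard `difflib` module, snapping a garbled guess to the closest allowed phrase. It is only an illustration: real recognisers constrain the decoder itself with a grammar or restricted vocabulary rather than post-processing text like this, and the command list and the 0.6 similarity cutoff are hypothetical.

```python
import difflib

# Hypothetical command set for a phone; a real app would define its own.
COMMANDS = ["call home", "play music", "set an alarm", "send a text"]

def match_command(hypothesis, commands=COMMANDS, cutoff=0.6):
    """Return the allowed command closest to the recognised text, or None."""
    matches = difflib.get_close_matches(hypothesis.lower(), commands, n=1, cutoff=cutoff)
    return matches[0] if matches else None

print(match_command("play musik"))                   # close enough to "play music"
print(match_command("what is the capital of peru"))  # nothing close, so None
```

With free conversation there is no such short list to fall back on, so every misheard word counts as an error.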

What words can it understand? What about slang?

The range of understanding of a speech to text system is dependent on the training material. At present, state of the art systems are based on dictionaries of words and don't generally attempt to recognise new words for which no dictionary entry exists (although systems that do this are available separately and could be combined into a speech to text solution if necessary). So the number and range of words understood by a speech to text system is currently (and I'm generalising here) a function of the number and range of words used in the training material. It doesn't really matter what these words are, whether they're conversational and slang terms or proper dictionary terms; so long as the system was trained on them, it should be able to recognise them again during a decode.
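Because a dictionary-based system can only output words it has an entry for, it's worth checking a sample of the speech you expect to decode against that dictionary. The sketch below computes the out-of-vocabulary (OOV) rate, assuming for simplicity that the dictionary is just the set of words in the training transcripts (real systems use a pronunciation dictionary). The sentences and the slang word "cya" are made up for illustration. Every OOV word will be misrecognised by such a system, so a high OOV rate puts a floor under the error rate.

```python
def out_of_vocabulary(transcript, vocabulary):
    """Words in the transcript that the recogniser has no dictionary entry for."""
    return [w for w in transcript.lower().split() if w not in vocabulary]

def oov_rate(transcript, vocabulary):
    """Fraction of transcript words the recogniser could never output."""
    words = transcript.lower().split()
    if not words:
        return 0.0
    return len(out_of_vocabulary(transcript, vocabulary)) / len(words)

# Hypothetical training transcripts; the vocabulary is every word they contain.
training = ["i am going to the shops", "see you later"]
vocabulary = {w for line in training for w in line.lower().split()}

spoken = "cya later i am going to the shops"
print(out_of_vocabulary(spoken, vocabulary))  # ['cya']
print(oov_rate(spoken, vocabulary))           # 0.125
```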

Updates and Maintenance

For the more discerning reader, you'll have realised by now a fundamental flaw in the plan laid out thus far. Language changes over time: people use new words and the meanings of words change. Text-speak is one of the new kids on the block in this area. It would be extremely cumbersome to have to train an entire new model each time you wanted to add some new language capability to your previous one. Fortunately, the models produced can be modified and updated with these changes without going back to a standing start and training from scratch. It's possible to take your existing model, built from the data you had available at a particular point in time, and use it to bootstrap the creation of a new model enhanced with the new material you've gathered since training the first one. Of course, you'll want to test and compare both models to check that you have in fact improved performance as you expected. As currently designed, all of these types of systems will require this kind of maintenance and updating as the structure and usage of our languages evolve.
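The post doesn't describe how any particular toolkit performs this update, so treat the following as a simplified illustration covering only the language-model side. It keeps the existing bigram counts, adds counts from newly gathered text rather than starting again, and then compares the old and new models on held-out text using perplexity (lower means the text is less surprising to the model). The add-one smoothing, the perplexity metric, the function names and the sample sentences are all assumptions made for this sketch; acoustic models are adapted with different techniques.

```python
import math
from collections import Counter

def bigram_counts(sentences):
    """Count bigrams and their histories from whitespace-tokenised sentences."""
    pairs, histories = Counter(), Counter()
    for sentence in sentences:
        words = ["<s>"] + sentence.lower().split() + ["</s>"]
        for prev, nxt in zip(words, words[1:]):
            pairs[(prev, nxt)] += 1
            histories[prev] += 1
    return pairs, histories

def update(model, new_sentences):
    """Return a new model: the old counts plus counts from newly gathered text."""
    pairs, histories = model
    new_pairs, new_histories = bigram_counts(new_sentences)
    return pairs + new_pairs, histories + new_histories  # old model is left unchanged

def perplexity(model, sentences, vocab_size):
    """Add-one smoothed bigram perplexity over the given sentences."""
    pairs, histories = model
    log_prob, n = 0.0, 0
    for sentence in sentences:
        words = ["<s>"] + sentence.lower().split() + ["</s>"]
        for prev, nxt in zip(words, words[1:]):
            p = (pairs[(prev, nxt)] + 1) / (histories[prev] + vocab_size)
            log_prob += math.log(p)
            n += 1
    return math.exp(-log_prob / n)

# Hypothetical data: the new material contains text-speak the old model never saw.
old_text = ["the prime minister said", "the weather is cold"]
new_text = ["lol the weather is cold", "omg the prime minister said"]
held_out = ["lol the prime minister said"]

old_model = bigram_counts(old_text)
new_model = update(old_model, new_text)  # bootstrapped from old_model, not from scratch
vocab_size = len({w for s in old_text + new_text + held_out for w in s.split()} | {"</s>"})
print(perplexity(old_model, held_out, vocab_size))
print(perplexity(new_model, held_out, vocab_size))  # lower for the updated model here
```

Comparing the two scores on the same held-out text is the "test and compare both models" step in miniature.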

Conclusion

OK, so this wasn't necessarily a blog post that was ever designed to draw a conclusion, but I wanted to wrap up by saying that this is an area of technology that is still very much in active research and development, and has been for at least 40 to 50 years! There's a really interesting observation I've seen in the field: if you ask a range of people involved in this topic “when will speech to text become a reality?”, the answer generally comes out at “in ten years' time”. This question has been asked consistently over time and the answer has remained the same. It seems, then, that either this is a really hard nut to crack or our expectations of such a system move on over time. Either way, it seems there will always be something new just around the corner to advance us to the next stage of speech technologies.
